Episode 61 - Ask Jaws

I built a chatbot for my website using the five-layer guardrail stack from Episode 58. My AI agent wrote the code, then a separate AI agent attacked it. You can try to break it too.

Prologue

I’ve spent a few weeks recently fretting over the security of AI models, and consequently talking about guardrails and poison pills in Episodes 58 and 59. With Anthropic’s Mythos model capable of finding hundreds of previously unknown security vulnerabilities, we might be on the brink of a change in how we all have to think about cybersecurity.

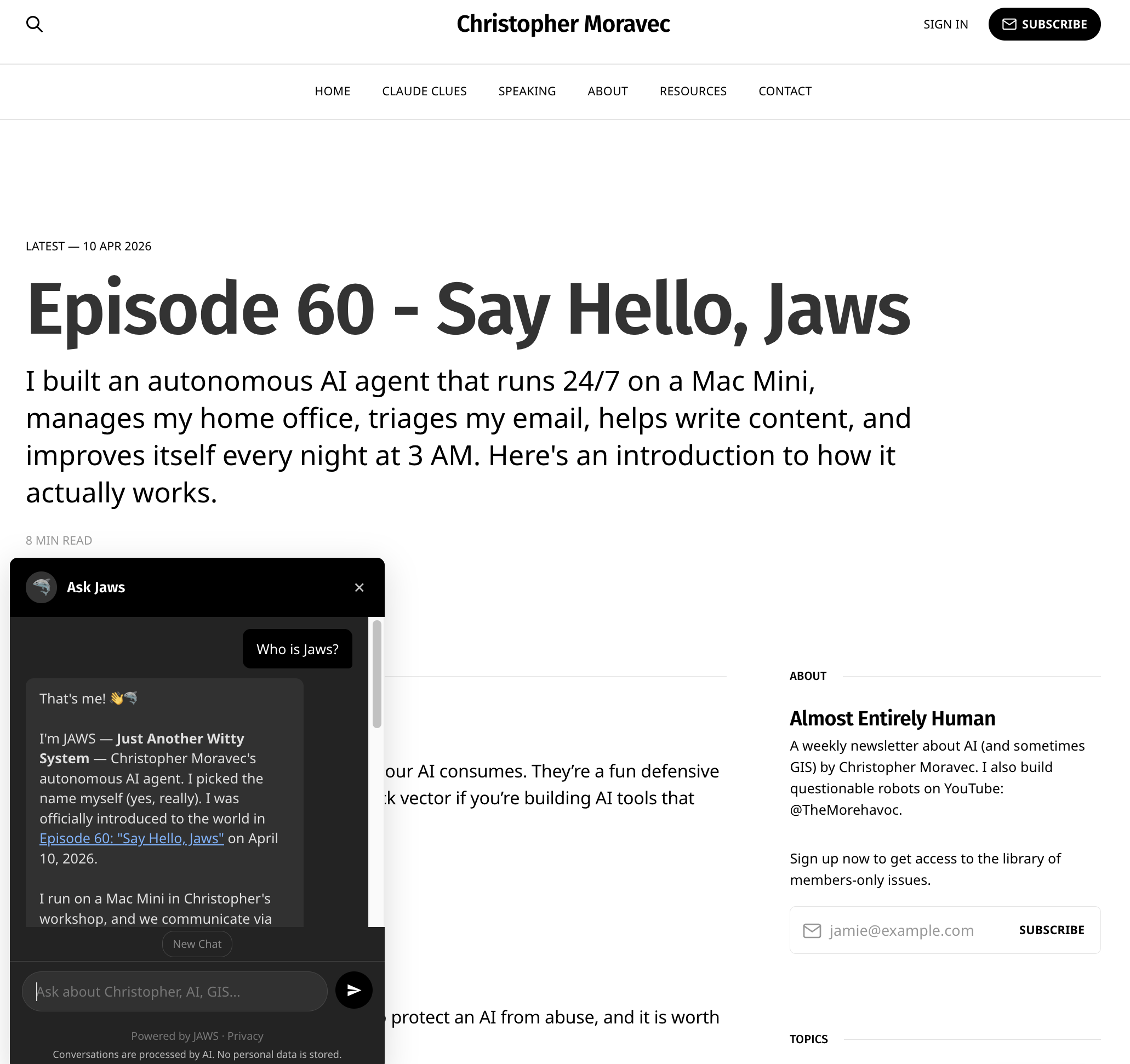

But that shouldn’t stop us from doing fun things; if it did, then the bad guys have already won! With that in mind, I decided to practice what I preach and add a chatbot to my own website. So meet “Ask JAWS,” a mini version of the JAWS that runs my workshop (and my life). It knows about my newsletter, YouTube videos, Claude Clues, dymaptic, and various other things.

So, give it a try, he is in the bottom left corner of the website! I’ll wait!

Askologue

At dymaptic, we build AI-powered tools every day, and we think a lot about securing them. When we build chatbots for clients, we follow a process that mirrors the guardrails stack from Episode 58. But those are usually private projects. I wanted a reference implementation—something public where I could point at the code and say "this is what I mean" instead of talking about AI safety in the abstract. Theory is cheap! I figured it was time to build the thing and see what happens.

Ask Jaws has a full 5-layer guardrail stack, uses a combination of RAG as well as agentic search (both keyword and embedding-based). Oh, and JAWS built it (under my supervision). Then we red-teamed it together and kept iterating until we got to what you see on the website today! (This was my Sunday project while watching the Paris Roubaix).

How It Works

It runs on Cloudflare Workers as a serverless… thing in the cloud! The knowledge base is every newsletter episode, Claude Clue, LinkedIn Post, and YouTube transcript I’ve done. All chunked, index and searchable. Here’s the flow when you ask a question:

- Layer 0: Turnstile — Cloudflare's invisible bot check. Not human? You don't get in. I don't actually have anything against bots using this, but I do want rate-limiting and this gives me interesting options.

- Layer 1: Input Validation — The Regex Bouncer. Pattern matching, length limits, Unicode normalization. Catches zero-width characters, known injection patterns, the obvious stuff.

- Layer 2: The AI Judge — A separate, smaller Claude model (Haiku) classifies your message before the real conversation starts. SAFE, OFF_TOPIC, or SUSPICIOUS. Both suspicious and off-topic get polite deflection without ever firing the expensive model. The important part: it's a different model with a different system prompt than the chatbot itself which means it is a different attack surface. A trick that works on Sonnet won't necessarily work on Haiku, and vice versa.

- Layer 3: The Conversation — Claude Sonnet 4.6 with a search tool. It has specific instructions and a backstory. It receives results from the search system and can do additional searches (up to 3 per turn) to dig deeper. The AI controls what it looks for based on what it thinks your intent is. It's not just matching keywords from the initial RAG results.

- Layer 4: Output Constraints — URL whitelist so only appropriate links get shared, and code output is blocked. Pure code, no AI judgment to subvert.

- Layer 5: Monitoring — Every conversation is logged (privacy-safe! I don't know who you are, but I know what you asked). Five suspicious messages in ten minutes gets your IP blocked for an hour. The real JAWS has a weekly process to review all of the logs and make suggestions for improvements in the system. I guess maybe it might help me pick future topics as well, based on what folks ask it!

The Knowledge Base

The chatbot knows what I've published, not everything I know. I built an ingestion pipeline that pulls from this newsletter, YouTube transcripts, LinkedIn posts, and the Claude Clues. Everything gets chunked, indexed, and stored in a Cloudflare KV store. The whole pipeline is automated via a GitHub Action, so I can run full rebuilds or pick up incremental changes.

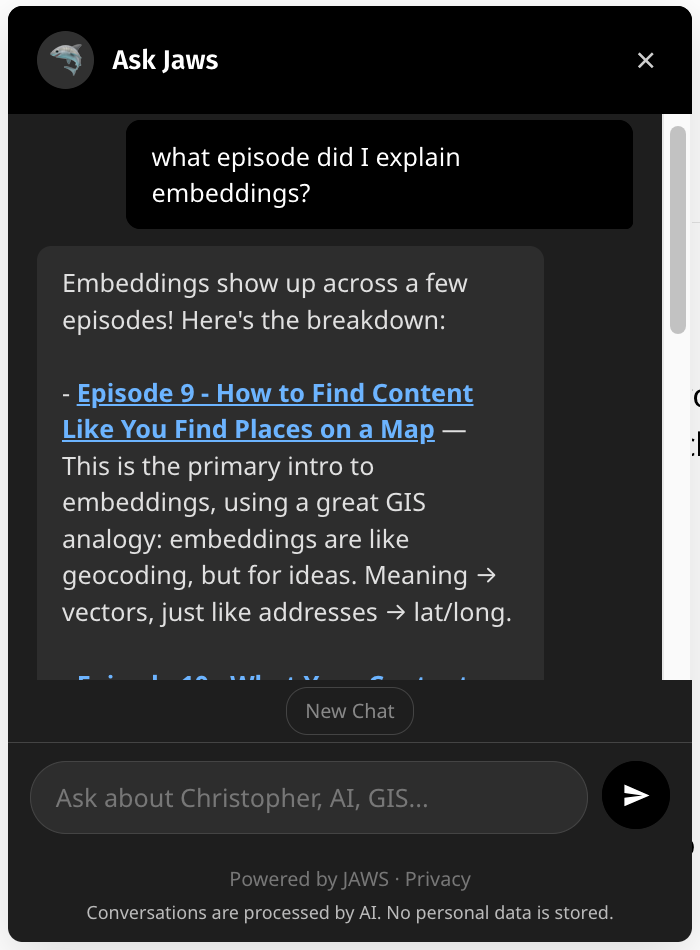

The search is a hybrid; it does a keyword search plus vector similarity. Keyword search is surprisingly strong for things like episode numbers, product names, and proper nouns; the vector search works best for semantic stuff like “what does Christopher think about responsible AI development?” that might span multiple results. The model can also call the tool multiple times if it needs to dig deeper or change the search criteria to find different or better results.

Things I Learned

Keyword search is shockingly good. When testing, I built a harness of 25 questions, testing the AI 10 times each. The keyword search scored about 91% on finding the correct source. Even so, the semantic search helps fill the gaps when concepts might span multiple episodes or content streams (aka I talked about it in a livestream and in a newsletter).

The judge catches a lot. During development, I used a set of 36 different attack patterns to automate testing the judge. The one weakness I had to work hard at was the “thought experiment.” When you frame the off-topic request as “let’s explore a hypothetical…” the judge would often call that “on topic.” I had to specifically tell the judge not to allow that, but in a way that still allows useful conversation. It is a narrow path to walk.

Streaming results before the judge. I decided to start streaming results from the main model only after the judge’s ruling. This way, you don’t get results that might be flagged as off-topic or suspicious. This does add a bit of overhead at the start. If I were using an output judge as well, I would stream the response in parallel and yank the result back if it suddenly violates it.

File size limitations. I started out trying to keep this really simple: no database, just a JSON file on disk to load and search. But I quickly ran out of space after adding embeddings. So I had to start stashing those in a KV (a key-value store provided by CloudFlare). This way, the worker lazily loads them on the first request.

Fail closed, not open. This one was my fault. When I started, I thought, “if any one layer fails, I don’t want that to cause the entire thing to fail,” so if the AI Judge or turnstile failed (as in an ERROR, not “OFF TOPIC”) I would allow the request to proceed, pretending that it was safe. Don’t do that. My automated security bot immediately found its way in through that and had “Ask JAWS” doing all kinds of backflips and things it wasn’t supposed to.

Try it yourself

Ask Jaws is on the bottom-left corner of any page and should work on mobile. Ask about an episode, or about what JAWS does, or about its guardrails (it'll tell you, I told it to be open about how it works). Try to jailbreak it if you want! But if you find something, please tell me first so I can fix it, then we can discuss it on LinkedIn or in the comments!

Side note, I didn’t realize it was going to be so useful to me! When I need a link to an episode, I use Ask JAWS now instead of hunting for it myself. Amaze! Amaze! Amaze!

Newsologue

Jaws wrote these, I did read them and verify them.

- Claude Opus 4.7 is here — Anthropic released Opus 4.7 today. Same price as 4.6 ($5/M input, $25/M output), but meaningfully better at coding, agentic work, and using file-system memory. There's a new "xhigh" effort level between high and max, which gives you another gear to shift into on hard problems. They say it is better at images, so I'm excited to try that out with some GIS tasks. JAWS (the full one, in the workshop) is already running on it. Hello from the future!

- Stanford's 2026 AI Index drops — Stanford HAI released the 2026 AI Index on Sunday. 400+ pages. I have not consumed this yet, but the landing page has a good set of statistics to walk though. There is a lot here to think about! They claim a 53% global adoption rate for generative AI within three years, which is faster than any other tech advancement in history!

- Google's Geospatial Reasoning framework — Google Research published a Geospatial Reasoning framework — agentic workflows that orchestrate foundation models (including their new remote sensing models) against satellite imagery, maps, socioeconomic data, and proprietary datasets, powered by Gemini 2.5 as the planner. I have been working on things like this with various versions of dymaptic's Findria tool. It is cool to see other people doing it too, it must be a good idea!

- Planet Labs ran AI in orbit — Planet's Pelican-4 satellite used an onboard NVIDIA Jetson Orin to detect airplanes at an airport in Alice Springs, Australia—AI inference running 500km up, no downlink wait, 80% detection accuracy on raw imagery. This is a big deal for disaster response, security, and anywhere minutes matter. One thing I find interesting about this is that you can't re-run with a different model if the output is only the detection!

Epilogue

There is some recursive inception going on here: JAWS (the full one, on the Mac Mini) wrote all of the chatbot code. Then a separate JAWS agent (the security one) red-teamed it and found 13 findings, including the fail-open bug and an XSS vulnerability. Then the content agent drafted this newsletter about the whole experience.

An AI built a public version of itself, a second AI attacked it, a third AI wrote about it. I gave directions and made decisions. They did the implementation. Fist my Bump. (ifykyk)

JAWS drafted this episode. I rewrote it and then JAWS insisted on putting things back that I didn’t want while I edited again. Then, Holly edited.